Six rules for AI agents

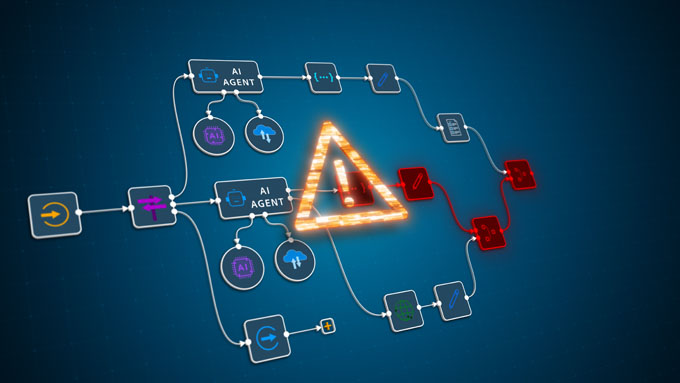

Artificial intelligence is becoming increasingly autonomous. Whether in production, logistics or internal processes, AI agents are making more and more decisions that trigger concrete actions. Clear rules are needed to avoid errors and misuse. The manufacturer Augmentir sets out six key guidelines.

AI agents are changing the way companies work. They take over routine tasks, analyze data and provide recommendations for action, often in real time. Analyst firm Gartner expects at least 15 percent of routine decisions in a company to be made autonomously by AI agents by 2028. However, the more important and widespread agents become, the greater the risks: small initial errors or opaque processes can quickly escalate into major damage if no corrective mechanism is in place.

Augmentir knows these challenges first-hand: as the manufacturer of an AI-based platform for connected workers, it works closely with leading industrial companies worldwide. The company has therefore developed the «6 rules for AI agents», practical guidelines intended to ensure that AI systems function transparently, responsibly and reliably. Those who adhere to these principles can exploit the full potential of the technology without jeopardizing the trust of customers and employees.

1. Transparency in execution

Every activity of an AI agent must be traceable: What instructions were given? Which tools were used? What results were achieved? Only if all steps are clearly documented can the agent's decisions be checked and understood. AI must therefore never be a «black box». Transparency creates trust and commitment.
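The three questions above (instruction, tool, result) map naturally onto a structured audit record. The following is a minimal sketch, not Augmentir's implementation: the class names `AgentStep` and `AuditTrail`, the field names and the JSON export are all assumptions chosen for illustration.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AgentStep:
    """One traceable step: what was asked, which tool ran, what came back."""
    instruction: str
    tool: str
    result: str
    # UTC timestamp so that steps from different systems can be ordered.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


@dataclass
class AuditTrail:
    """Hypothetical append-only log of everything one agent did."""
    agent_id: str
    steps: list = field(default_factory=list)

    def record(self, instruction: str, tool: str, result: str) -> AgentStep:
        step = AgentStep(instruction, tool, result)
        self.steps.append(step)
        return step

    def to_json(self) -> str:
        # Serialize the whole trail so that a reviewer can inspect it later.
        return json.dumps(
            {"agent_id": self.agent_id, "steps": [asdict(s) for s in self.steps]},
            indent=2,
        )
```

A caller would invoke `trail.record(...)` after every tool call and store the output of `trail.to_json()` alongside the agent's final recommendation, so that each decision can be reconstructed step by step.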

2. Clear lines of responsibility and accountability

Every AI agent needs an accountable owner who answers for its decisions. This role can be filled either by a person or by an organizational unit, and its responsibilities and duties must be clearly defined. Only then is it clear at all times who is in control. However powerful AI is or becomes, responsibility always lies with people, not machines.

3. Disclosure of the AI origin

If an agent provides recommendations or decisions, it must clearly state that they originate from an AI. This includes the explicit notice: «Errors cannot be ruled out». This guards against both inflated expectations and excessive dependence on AI. Human judgment thus remains the focus.

4. Complete documentation of AI participation

AI-generated recommendations or content are often shared on other platforms, for example in Microsoft Teams or internal portals. Even then, the reference to the AI source and the corresponding disclaimers must not be lost. This information must travel with the content, regardless of where it is shared. Cross-platform transparency is essential.
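One way to keep the AI notice from rules 3 and 4 attached to the content is to make it part of the content object itself, so that no sharing path can omit it. The sketch below assumes a hypothetical `AIContent` type and a hypothetical `share` function that only builds a payload. It does not call Microsoft Teams or any other real API.

```python
from dataclasses import dataclass

# Wording based on the notice quoted in rule 3; the first sentence is an assumption.
AI_DISCLAIMER = "Generated by an AI agent. Errors cannot be ruled out."


@dataclass(frozen=True)
class AIContent:
    """AI-generated text bundled with its origin and disclaimer."""
    text: str
    agent_id: str
    disclaimer: str = AI_DISCLAIMER

    def render(self) -> str:
        # The disclosure is appended to the body, not kept in a side channel.
        return f"{self.text}\n\n[{self.disclaimer} Source agent: {self.agent_id}]"


def share(content: AIContent, channel: str) -> dict:
    """Build the payload for a (hypothetical) target channel.

    Only the rendered form is exposed, so the disclosure reaches
    every platform together with the content.
    """
    return {
        "channel": channel,
        "body": content.render(),
        "ai_generated": True,
        "agent_id": content.agent_id,
    }
```

Because `AIContent` is frozen and `share` only uses `render()`, downstream code in this sketch has no path that forwards the bare text without its notice.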

5. The more impact, the more human control

AI can and should make recommendations. However, as soon as measures have a real impact, a human must review and approve the decision. Examples include handling a quality defect in a product or reporting a safety incident at a plant. In short: AI may provide support, but where its actions lead to significant economic or personal consequences, human supervision is required.

6. No generative AI in case of danger to life and limb

There are clear no-gos wherever people could come to harm. As things stand today, generative AI agents should not intervene autonomously in safety-critical areas with potentially life-threatening consequences. Actions in these areas require a defined, verifiable procedure and strict safety protocols. Relying solely on the statistical probabilities of algorithms is not enough here. Such decisions must be reserved for experts.
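Rules 5 and 6 can be read together as a gate in front of every agent action. The sketch below is one possible interpretation: the three impact levels, the exception names and the `execute` function are assumptions, and the article does not define where the thresholds lie.

```python
from enum import Enum
from typing import Optional


class Impact(Enum):
    LOW = 1              # routine, easily reversible
    SIGNIFICANT = 2      # economic or personal consequences (rule 5)
    SAFETY_CRITICAL = 3  # potential danger to life and limb (rule 6)


class ApprovalRequired(Exception):
    """Raised when a significant action lacks human sign-off."""


class AutonomousActionForbidden(Exception):
    """Raised when an agent attempts a safety-critical action."""


def execute(action: str, impact: Impact, approved_by: Optional[str] = None) -> str:
    """Run an agent action only if the human-control rules allow it."""
    if impact is Impact.SAFETY_CRITICAL:
        # Rule 6: reserved for experts following a defined procedure,
        # so the agent path is blocked regardless of any approval.
        raise AutonomousActionForbidden(action)
    if impact is Impact.SIGNIFICANT and approved_by is None:
        # Rule 5: a human must review and approve first.
        raise ApprovalRequired(action)
    suffix = f" (approved by {approved_by})" if approved_by else ""
    return f"executed: {action}{suffix}"
```

In this sketch, a safety-critical action is rejected even when an approver is named, on the reading that such measures belong in a separate, expert-led procedure and should not pass through the agent at all.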

Conclusion: For a responsible future

The conscientious use of artificial intelligence requires clear guidelines: transparency, human control and binding safety rules. Legislators have also recognized this and formulated binding minimum requirements for the use of AI with the EU AI Act. Those who firmly establish such requirements not only ensure compliance, but also strengthen the trust of employees, partners and customers. «We firmly believe that AI should complement human intelligence and not replace it. Binding rules are needed to ensure that this principle is upheld,» says Carsten Hunfeld, Director EMEA at Augmentir, adding: «This is the only way to use AI efficiently and with calculable risk, both now and in the future.»

Source: Augmentir